From cat videos to Artemis II: the rise of space laser communications

When people talk about the future of space, they usually start with rockets. But getting enough data back to Earth, fast enough to matter, is the next real bottleneck.

Issue 165. Subscribers: 84 842

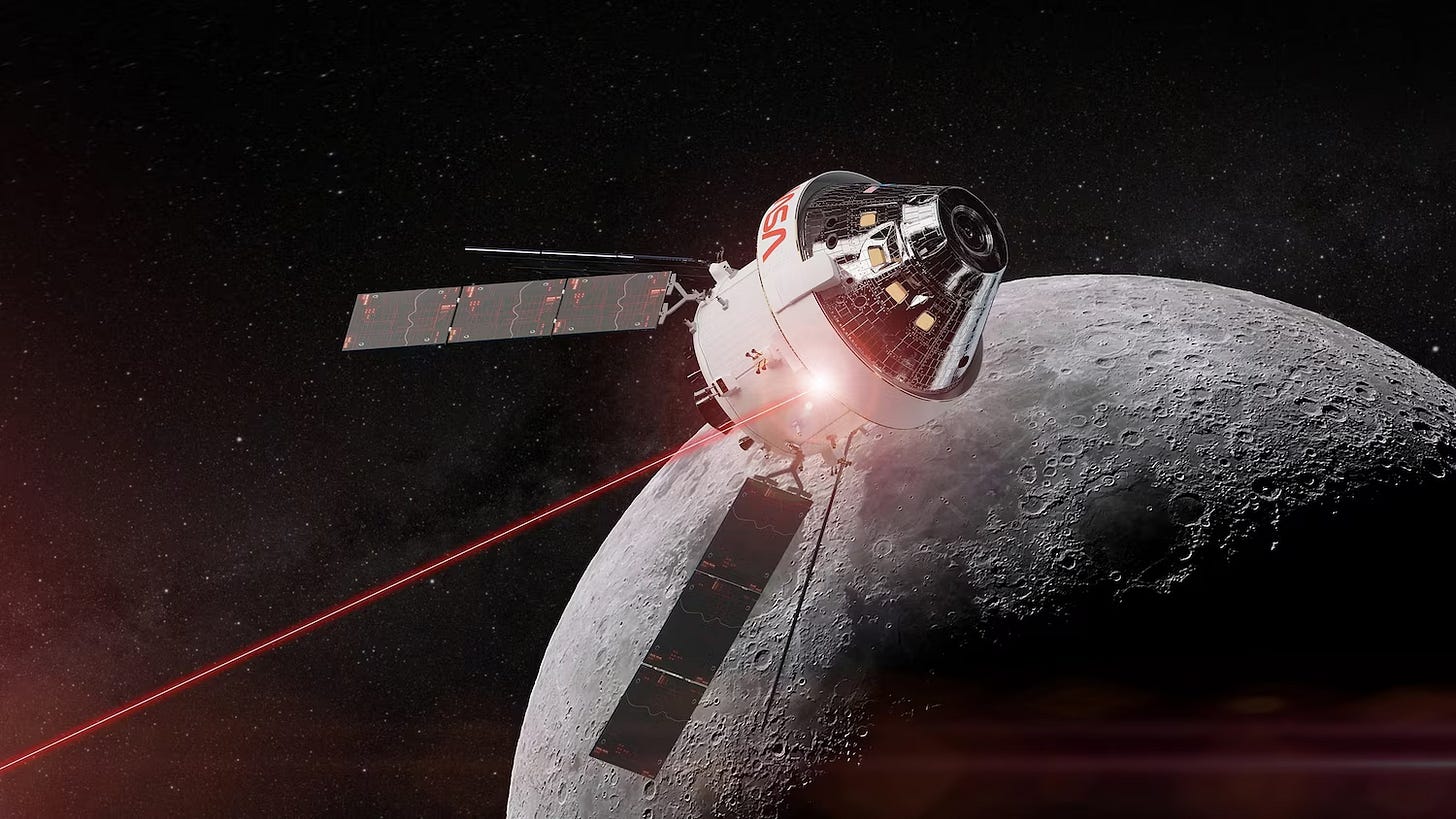

On 1st April 2026, NASA is set to launch Artemis II, the first crewed lunar mission since Apollo 17. One reason this mission matters beyond the obvious symbolism is that Orion will carry the Orion Artemis II Optical Communications System (O2O), which is expected to let astronauts flying around the Moon send back higher-quality images and video by laser. Laser links like this can carry up to 100 times more video, high-resolution images, flight plans, and other mission data than comparable radio systems.

Why light can beat radio

That sounds futuristic, but the core idea is simple. Optical communication means sending data through free space as light instead of as radio waves. On Earth, we already trust light with our most important communications: fiber-optic cables carry the modern internet. Light has two advantages here. First, a light wave oscillates at enormously higher frequencies (hundreds of terahertz, versus a few to a few tens of gigahertz for typical space radio links) than radio or electrical signals in copper. In practical terms, that gives engineers a much bigger “canvas” on which to encode information. Second, light in glass fiber degrades less over distance: fiber guides light with very low loss, so you can move much more data much farther before the signal needs cleanup and amplification.
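To put rough numbers on the “canvas” point, the carrier frequency of light follows from f = c / λ. The sketch below assumes a 1550 nm laser (the standard fiber telecom band) and typical X-band (8.4 GHz) and Ka-band (32 GHz) radio carriers; these are illustrative values, not the parameters of any specific terminal. The carrier frequency is not the data rate; it only bounds how much bandwidth a system could, in principle, modulate.

```python
# Rough comparison of carrier frequencies, using f = c / wavelength.
# The wavelength and radio frequencies below are illustrative assumptions.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def frequency_hz(wavelength_m: float) -> float:
    """Return the carrier frequency for a given wavelength in metres."""
    return SPEED_OF_LIGHT_M_S / wavelength_m


optical_hz = frequency_hz(1550e-9)  # assumed 1550 nm telecom-band laser
x_band_hz = 8.4e9                   # assumed X-band radio carrier
ka_band_hz = 32e9                   # assumed Ka-band radio carrier

print(f"1550 nm carrier: {optical_hz / 1e12:.1f} THz")
print(f"about {optical_hz / x_band_hz:,.0f}x an X-band carrier")
print(f"about {optical_hz / ka_band_hz:,.0f}x a Ka-band carrier")
```

Even against Ka-band, the optical carrier is several thousand times higher in frequency.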

Space laser communications is the wireless cousin of that idea. Instead of pushing bits through glass, you aim a very narrow beam of light from one terminal to another. You gain speed and efficiency, but only if you can point with extraordinary precision.
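Why does pointing matter so much? A diffraction-limited beam spreads by an angle on the order of λ / D, where D is the transmitting aperture. The sketch below applies that order-of-magnitude rule at the mean Earth-Moon distance, assuming a hypothetical 10 cm optical telescope at 1550 nm and a hypothetical 3 m X-band dish at 8.4 GHz; real terminals use more careful beam models and different apertures.

```python
# Order-of-magnitude beam spread: full divergence angle ~ wavelength / aperture.
# Apertures and frequencies are illustrative assumptions, not real terminal specs.
SPEED_OF_LIGHT_M_S = 299_792_458.0
EARTH_MOON_MEAN_M = 384_400e3  # mean Earth-Moon distance, metres


def spot_diameter_m(wavelength_m: float, aperture_m: float, distance_m: float) -> float:
    """Approximate beam footprint diameter after travelling distance_m."""
    divergence_rad = wavelength_m / aperture_m
    return divergence_rad * distance_m


laser_divergence_rad = 1550e-9 / 0.10
laser_spot = spot_diameter_m(1550e-9, 0.10, EARTH_MOON_MEAN_M)  # 10 cm telescope
radio_spot = spot_diameter_m(SPEED_OF_LIGHT_M_S / 8.4e9, 3.0, EARTH_MOON_MEAN_M)  # 3 m dish

print(f"laser divergence: {laser_divergence_rad * 1e6:.1f} microradians")
print(f"laser footprint at the Moon: {laser_spot / 1e3:.0f} km")
print(f"radio footprint at the Moon: {radio_spot / 1e3:,.0f} km")
```

Under these assumptions the laser footprint is hundreds of times narrower, so far more of the transmitted power lands on the receiver. The price is that the transmitter has to hold its aim to a small fraction of roughly 15 microradians while both ends are moving.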

Optical communication in space is not a brand-new idea. Governments and agencies have been working on it for years, and Europe has already turned it into an operational relay service: the European Data Relay System (EDRS), a partnership between ESA and Airbus, marketed by Airbus as the Space Data Highway, with laser terminals built by Tesat-Spacecom, an Airbus subsidiary. According to Airbus, over its first eight years of routine operations the system reached transfer rates of up to 1.8 Gbps, daily volumes of up to 40 TB, and more than 80,000 successful laser connections. In other words, this is no longer laboratory theater. It is already part of the real infrastructure.
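A quick sanity check helps read the EDRS figures together. Taking the quoted 1.8 Gbps and 40 TB at face value (decimal units assumed), a single link running nonstop for 24 hours cannot reach 40 TB, so the daily peak presumably aggregates traffic from more than one link. This is simple arithmetic on the published numbers, not a description of how EDRS schedules its links.

```python
# How much can one link move in a day at a constant rate? Decimal units assumed.
SECONDS_PER_DAY = 86_400


def max_daily_volume_tb(rate_bps: float) -> float:
    """Upper bound on terabytes per day for one link running nonstop."""
    return rate_bps * SECONDS_PER_DAY / 8 / 1e12


single_link_tb = max_daily_volume_tb(1.8e9)  # quoted peak transfer rate
link_days_for_peak = 40 / single_link_tb     # quoted peak daily volume

print(f"one 1.8 Gbps link, 24 h nonstop: {single_link_tb:.2f} TB")
print(f"40 TB needs about {link_days_for_peak:.1f} link-days at that rate")
```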

NASA, meanwhile, has been pushing the frontier in another direction: peak performance and deep-space distance. The most famous recent result in near-Earth space came from TBIRD, the TeraByte InfraRed Delivery mission. In 2023 and 2024, NASA and MIT Lincoln Laboratory showed that a 6U CubeSat could downlink at 200 Gbps and transfer up to 4.8 terabytes in a single five-minute pass from low Earth orbit to the ground. NASA explicitly described this as the fastest downlink ever achieved from space. It is more than a thousand times faster than a typical American household connection.

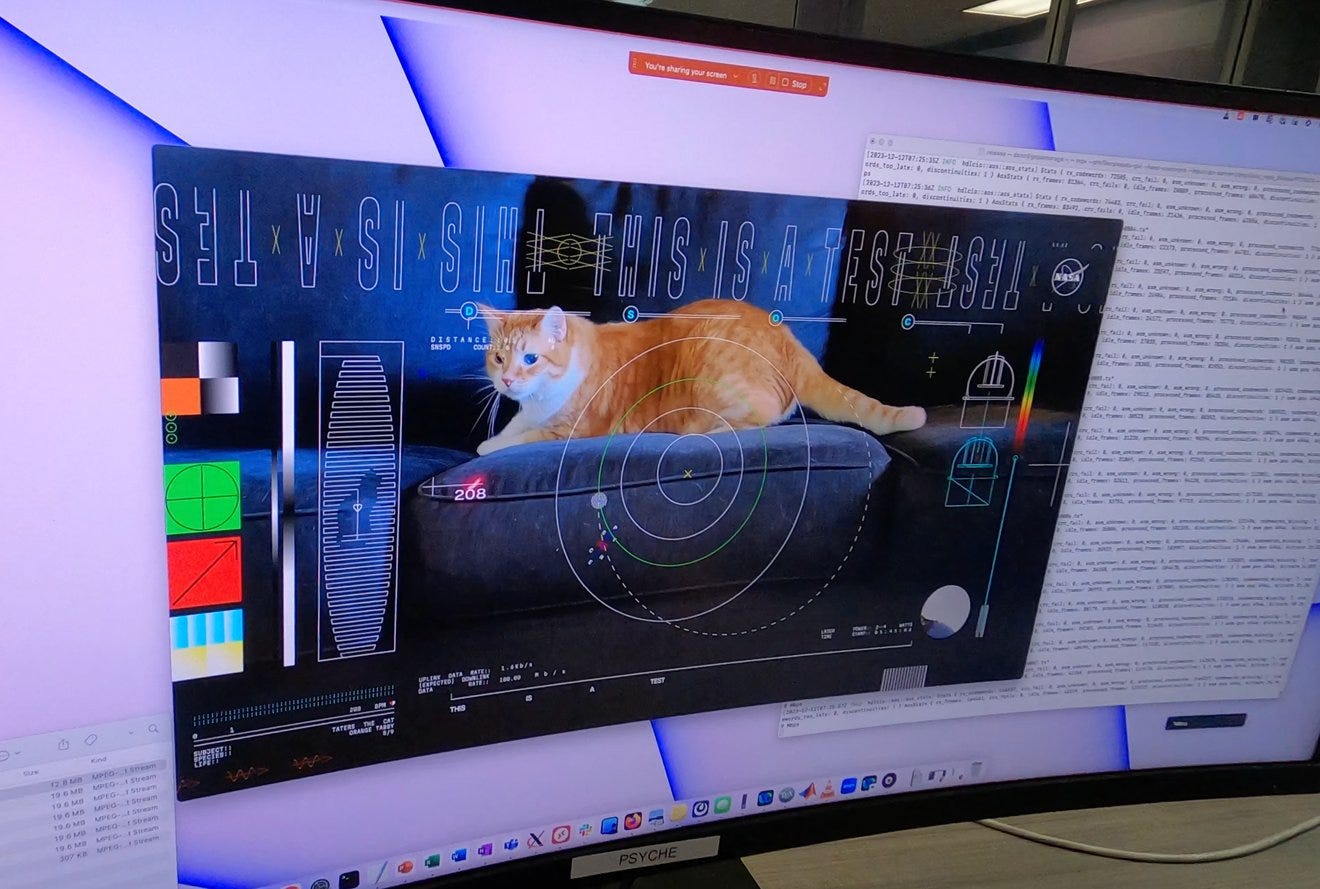

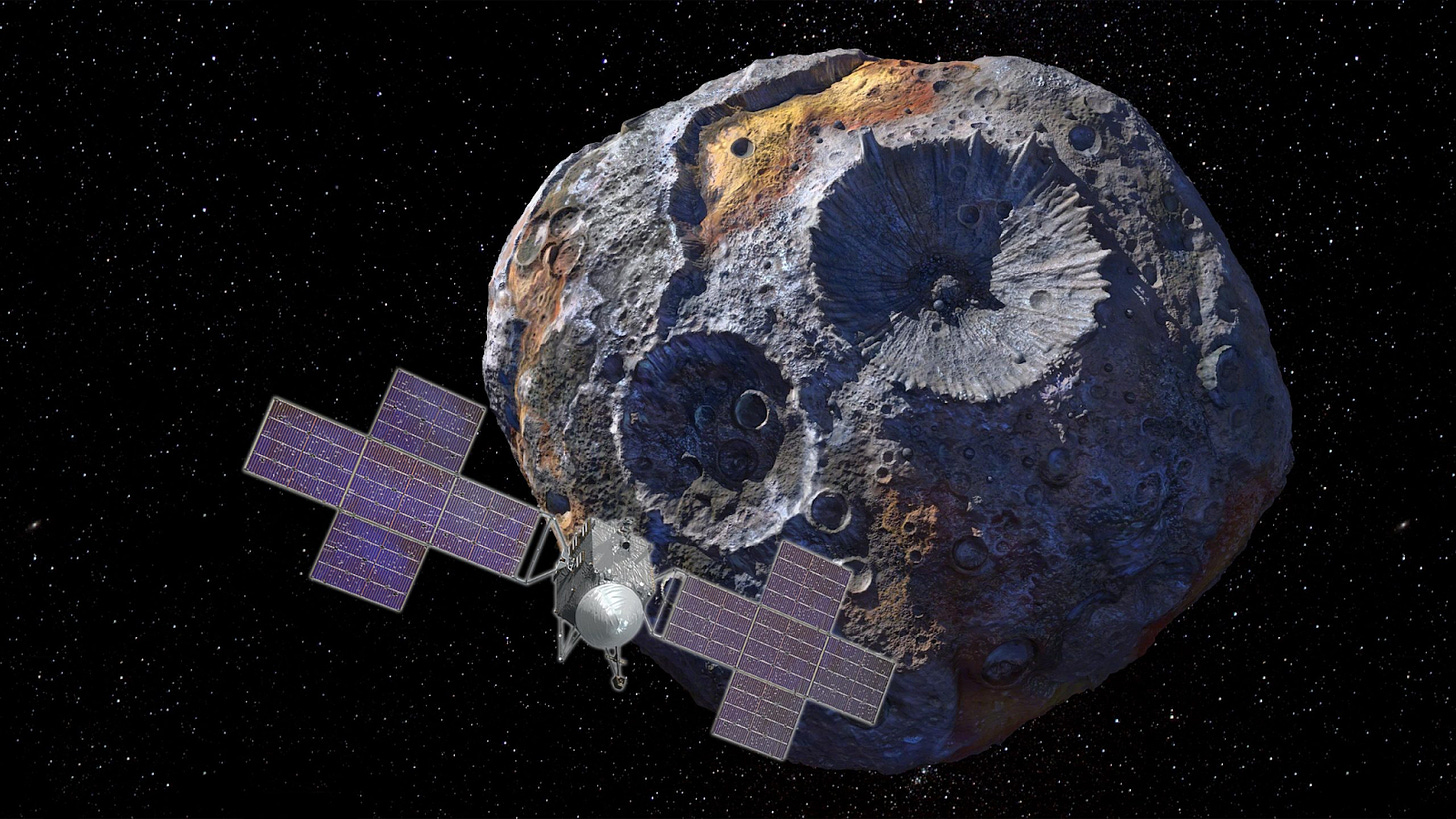

Deep space has been moving too. NASA’s Deep Space Optical Communications (DSOC) experiment, flying with the Psyche mission, first became famous for sending back a short ultra-high-definition test video of a cat named Taters chasing a laser pointer, from nearly 19 million miles away, at 267 Mbps. That was not just a PR event, though it was a very good one: it showed that deep-space laser links could carry media-rich traffic, not just sparse engineering telemetry. Since then, NASA has gone much further, sending a laser signal to Psyche at about 290 million miles, roughly the distance between Earth and Mars when the planets are farthest apart. The question is no longer whether laser communication can work beyond the Earth-Moon system. The real question is how quickly it becomes normal.
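The two distances in the DSOC story imply very different operating conditions. The sketch below computes the one-way light time at each distance and the extra free-space spreading loss between them, using only the inverse-square law (received power from a fixed transmitter falls as 1/d²). It ignores everything else in a real link budget, such as apertures, pointing losses, and background light.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458
KM_PER_MILE = 1.609344


def one_way_light_time_s(distance_miles: float) -> float:
    """Seconds for light to cover the distance once."""
    return distance_miles * KM_PER_MILE / SPEED_OF_LIGHT_KM_S


def extra_spreading_loss_db(near_miles: float, far_miles: float) -> float:
    """Additional inverse-square loss, in dB, when moving from near to far."""
    return 10 * math.log10((far_miles / near_miles) ** 2)


taters_delay_s = one_way_light_time_s(19e6)  # cat video, ~19 million miles
far_delay_s = one_way_light_time_s(290e6)    # later link, ~290 million miles
loss_db = extra_spreading_loss_db(19e6, 290e6)

print(f"one-way delay at 19M miles: {taters_delay_s:.0f} s")
print(f"one-way delay at 290M miles: {far_delay_s / 60:.1f} min")
print(f"extra spreading loss: {loss_db:.1f} dB (~{10 ** (loss_db / 10):.0f}x less power)")
```

A roughly 230-fold drop in received power, plus a one-way delay of nearly half an hour, is part of why deep-space laser links are a different engineering problem from near-Earth ones.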

When satellites collect more than they can return

To see why this matters, it helps to step back from the headline numbers and look at the underlying problem. Space is developing a data bottleneck. For years, the industry’s public narrative centered on launch: cheaper access to orbit, more payloads. But once launch gets easier and sensors get better, another constraint moves to the front. Satellites can often collect more data than they can return.

In 2021, NASA said that just two missions, SWOT and NISAR, would together produce roughly 100 TB of data per day. And that is before adding the many commercial Earth-observation constellations now flying or being designed with higher resolution, more spectral bands, more frequent revisits, and more onboard processing.
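Converting a daily volume into a continuous rate makes the scale concrete. Assuming decimal terabytes, 100 TB/day works out to more than 9 Gbps sustained around the clock. The comparison with TBIRD-sized passes simply reuses the 4.8 TB single-pass figure quoted earlier and is illustrative only.

```python
import math

SECONDS_PER_DAY = 86_400


def sustained_rate_gbps(tb_per_day: float) -> float:
    """Continuous rate needed to move tb_per_day (decimal TB) in 24 hours."""
    return tb_per_day * 1e12 * 8 / SECONDS_PER_DAY / 1e9


rate_gbps = sustained_rate_gbps(100)  # SWOT + NISAR, per NASA's 2021 estimate
tbird_passes = math.ceil(100 / 4.8)   # passes at TBIRD's best single-pass volume

print(f"100 TB/day ~ {rate_gbps:.2f} Gbps, nonstop")
print(f"or about {tbird_passes} TBIRD-class passes per day")
```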

The bottleneck has several dimensions. The obvious one is bandwidth: how many bits can you send during a pass? But there is also time. A satellite in LEO may only see a particular ground station for a few minutes. In that short window, every second spent on acquisition, pointing, and protocol overhead is a second not spent delivering customer value. There is also latency: if an Earth-observation image has commercial value now, waiting tens of minutes or hours for the next ground contact degrades that value. And there is onboard buffering: if you keep generating data faster than you can empty storage, your spacecraft becomes partly a flying hard drive. Together, these constraints shape mission economics, product design, and customers’ willingness to pay.
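These dimensions interact. The toy model below, with entirely hypothetical parameters (500 GB/day generated, six 8-minute passes per day, 60 s of acquisition overhead per pass, a 1 Gbps link), shows how per-pass overhead and limited contact time turn into a growing onboard backlog. `LinkPlan` and `backlog_history` are illustrative names; the model covers bandwidth, contact time, and buffering only, and ignores latency.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class LinkPlan:
    """Hypothetical daily contact plan for one satellite (decimal GB)."""
    generation_gb_per_day: float  # data produced onboard each day
    passes_per_day: int           # ground-station contacts per day
    pass_minutes: float           # visibility window per pass
    overhead_s: float             # acquisition, pointing, protocol setup per pass
    link_rate_mbps: float         # rate once the link is up

    def downlink_gb_per_day(self) -> float:
        usable_s = max(0.0, self.pass_minutes * 60 - self.overhead_s)
        return self.passes_per_day * usable_s * self.link_rate_mbps * 1e6 / 8 / 1e9


def backlog_history(plan: LinkPlan, days: int, start_gb: float = 0.0) -> list[float]:
    """Onboard backlog at the end of each day (never negative)."""
    backlog = start_gb
    history = []
    for _ in range(days):
        backlog = max(0.0, backlog + plan.generation_gb_per_day - plan.downlink_gb_per_day())
        history.append(backlog)
    return history


plan = LinkPlan(generation_gb_per_day=500, passes_per_day=6,
                pass_minutes=8, overhead_s=60, link_rate_mbps=1_000)
print(f"downlink capacity: {plan.downlink_gb_per_day():.1f} GB/day")
print("backlog by day:", backlog_history(plan, days=5))
```

In this made-up case, 60 s of overhead per pass costs 45 GB of downlink a day, and the backlog grows by 185 GB every day. Raising the link rate, adding passes, or cutting overhead are the levers; onboard storage decides how long the mismatch can last.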

This is where optical communication starts to look less like a cool subsystem and more like a missing infrastructure layer. The TBIRD demonstration again provides a vivid comparison. In one pass, from a CubeSat in a roughly 530 km sun-synchronous orbit, it delivered 4.8 TB error-free in five minutes at 200 Gbps. A TBIRD-type architecture does not solve everything, but it shows that the bottleneck is not ordained by physics: it is at least partly an infrastructure gap.
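The headline figures also hint at how much of a pass runs at peak rate. Assuming decimal terabytes and a pass of exactly five minutes, 4.8 TB corresponds to a little over three minutes at the full 200 Gbps, or about 128 Gbps averaged over the whole pass. The gap is consistent with the acquisition and overhead costs described earlier, though this arithmetic alone cannot say where the time went.

```python
volume_bits = 4.8e12 * 8  # 4.8 TB, decimal units assumed
peak_rate_bps = 200e9     # quoted peak downlink rate
pass_s = 5 * 60           # "five-minute pass", taken as exactly 300 s

seconds_at_peak = volume_bits / peak_rate_bps
average_rate_gbps = volume_bits / pass_s / 1e9

print(f"time needed at 200 Gbps: {seconds_at_peak:.0f} s of {pass_s} s")
print(f"average over the pass: {average_rate_gbps:.0f} Gbps")
```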

The Artemis II O2O planning slides offer a good, small-scale illustration of this economic logic. NASA’s figures show Orion subsystems generating roughly 250 GB of data in the first day of the mission and about 300 GB by the end. With continuous S-band alone, NASA estimated downlink would be limited to about 7 GB/day, leaving around 230 GB still onboard at landing. With one hour per day of 260 Mbps optical comms, that jumps to about 117 GB/day; with two hours per day, about 234 GB/day. That is not a cosmetic improvement. It determines whether data accumulates onboard for days or is largely cleared in near-real time.
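NASA’s O2O numbers are easy to reproduce. The sketch below converts the 260 Mbps optical rate into gigabytes per day of contact and checks the S-band backlog, assuming decimal gigabytes and a mission of about ten days (the duration that makes 300 GB minus 7 GB/day come out at roughly 230 GB). The helper `gb_per_day` is illustrative, and the sketch does not model when data is generated or how optical and S-band downlink are combined.

```python
def gb_per_day(rate_mbps: float, hours_per_day: float) -> float:
    """Decimal GB moved per day at a constant rate for the given contact time."""
    return rate_mbps * 1e6 * hours_per_day * 3600 / 8 / 1e9


optical_1h = gb_per_day(260, 1)  # one hour of optical contact per day
optical_2h = gb_per_day(260, 2)  # two hours per day

s_band_gb_per_day = 7
mission_days = 10                # assumed mission length
total_generated_gb = 300
s_band_leftover = total_generated_gb - s_band_gb_per_day * mission_days
s_band_avg_mbps = s_band_gb_per_day * 1e9 * 8 / 86_400 / 1e6

print(f"optical, 1 h/day: {optical_1h:.0f} GB/day; 2 h/day: {optical_2h:.0f} GB/day")
print(f"S-band only: ~{s_band_avg_mbps:.2f} Mbps average, {s_band_leftover} GB left at landing")
```

In other words, one hour of laser contact moves roughly seventeen days’ worth of S-band traffic.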

Once you look at communications this way, the most interesting question becomes: what missions become possible when communications stop being the obvious constraint? Near-live Earth observation is one answer. Faster relay between spacecraft is another. Airbus’ Space Data Highway already markets “almost real-time” data delivery as a service feature.

One of the most charming details in the field is that NASA used a cat video to help demonstrate deep-space optical communications: Taters, chasing a laser pointer, became the first UHD video streamed from deep space via laser. There is also a serious point hiding in the joke. If cat videos are already in the downlink, is it time for your regular YouTube videos to be streamed from a space-based data center?

How optical communication becomes infrastructure

In our earlier piece, we argued that space-based data centers still face several decisive technical bottlenecks. Cheap, mass-efficient, energy-efficient data transfer to and from orbit is one of them. That said, the direction is unmistakable. Space does not need optical communications to become perfect overnight. It needs the first serious use cases to become routine enough to launch learning curves, supplier ecosystems, and deployment feedback loops.

That is now beginning to happen. Early demand is forming around the domains where the pain is clearest and the value of better links is most evident. Earth observation is one. Inter-satellite links inside large constellations are another. The right way to think about space laser communication today is as a technology moving through the most important phase of its life: from demos and niche operational programs toward broader infrastructure status. The architectures are not settled, the ground segment remains hard, and atmospheric effects remain real. But the field has crossed a line. If Artemis II really does send home cleaner video from the Moon, that will be a good image to remember, because it captures the shift in one scene: communication in space is no longer only about keeping contact. It is increasingly about moving enough data, fast enough, that orbit starts to behave less like a collection of isolated spacecraft and more like the beginning of a network.

Data downlink is definitely the core bottleneck in the space data value chain. Optical communication is critical, but it may still struggle to keep up with the speed of data generation.

Another important angle is pushing for more edge processing: filtering, compressing, or extracting information onboard so we don't need to downlink raw data in the first place. This could potentially reduce bandwidth needs by orders of magnitude. But it puts software more in the spotlight, and I feel it may require a broader mindset shift toward a more flexible, software-defined approach to compute infrastructure in space.
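A minimal sketch of that idea, assuming imagery is split into tiles and each tile already carries a cloud-fraction estimate: the hypothetical function `onboard_reduce` drops mostly cloudy tiles and compresses the rest with `zlib` before queuing them for downlink. The synthetic tiles and cloud fractions are made up and deliberately repetitive, so they compress far better than real imagery would; only the structure of the pipeline, not the measured ratio, carries over.

```python
from __future__ import annotations

import zlib


def onboard_reduce(tiles: list[tuple[float, bytes]], max_cloud: float) -> tuple[int, int]:
    """Drop tiles cloudier than max_cloud, compress the rest.

    tiles: (cloud_fraction, raw_bytes) pairs; the cloud estimate is assumed given.
    Returns (raw bytes collected, bytes queued for downlink).
    """
    raw_total = sum(len(payload) for _, payload in tiles)
    kept = [payload for cloud, payload in tiles if cloud < max_cloud]
    queued = sum(len(zlib.compress(payload, 9)) for payload in kept)
    return raw_total, queued


# Synthetic stand-in data: ten 16 KiB tiles with made-up cloud fractions.
tile = bytes(range(256)) * 64
clouds = [0.9, 0.1, 0.95, 0.2, 0.8, 0.05, 0.99, 0.7, 0.3, 0.85]
tiles = [(c, tile) for c in clouds]

raw, queued = onboard_reduce(tiles, max_cloud=0.5)
print(f"raw: {raw} bytes, queued for downlink: {queued} bytes")
```

Filtering and compression stack: the first removes whole tiles that are not worth sending, the second shrinks what is left, and both happen before the link is even involved.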

Curious to hear your take on this.